
When autism first appeared in the "diagnostic bible," the 1980 Diagnostic and Statistical Manual, one of the few criteria for diagnosis was "gross deficits in language development" (APA, 1980). Autism was once associated with:

"marked abnormalities in the production of speech, including volume, pitch, stress, rate, rhythm, and intonation" (1987)

"marked abnormalities in the form or content of speech, including stereotyped and repetitive use of speech" (1987)

"delay in, or total lack of, the development of spoken language." (1994, 2000).

Today, in the most recent version, language disabilities are not even referenced (2013).

So, as the definition of autism expanded, abnormal language development started out as a defining characteristic, then became an optional trait, and now is no longer part of the diagnosis.

This change might seem strange to most people exposed to autism in the media, and even many who know autistic people personally. After all, many autistic children still do not produce spoken language, and some people who speak fluently sound "odd" in their volume, pitch, or even choice of words. So, what is autistic language like? Has it really changed in the past forty-odd years, or have researchers and clinicians just deemed it less essential?

Morton Ann Gernsbacher, Elizabeth Grace, and I tried to find out. We read hundreds of papers on the topic published since 2000. These studies examined every imaginable language skill, in every imaginable age group, with every imaginable method. What we learned might surprise you. Details can be found in our book chapter here, but since it will likely be hard to access, I'm summarizing the important points below.

Autistic Language is Often Delayed.

Studies often find that autistic children develop language more slowly than typically developing peers, especially when it comes to spoken language. They may be delayed in speaking their first words, first combinations of words (e.g., "blue-car") and first grammatical sentences [1-7].

Parents report that young autistic children say fewer words than age peers [1,8-14]. In fact, their concern about late talking often leads them to seek out a diagnosis [15].

Parents also often report that autistic children understand fewer words [1,8,10,11,16-20]. However, parents can easily either underestimate or overestimate what a young autistic child knows. If an autistic child responds with atypical words or body language, or does not respond at all, a parent may mistakenly assume the child does not understand. Alternatively, a parent may think a child understands language associated with a routine (e.g., "let's go outside") when the child really only understands the behavior that accompanies it (e.g., taking the child's coat and shoes out of the closet).

Fortunately, there are more objective ways to measure children's language understanding, which involve testing them directly. These include standardized tests such as the Peabody Picture Vocabulary Test (PPVT) and its equivalent, the British Picture Vocabulary Scale (BPVS); the Preschool Language Scale; or the Clinical Evaluation of Language Fundamentals (CELF). Many studies using such tests indicate delays in understanding language, not just speaking [8-10,17,20-29].

Autistic Language is Variable.

Some studies do not find any difference between autistic people and age peers [30-34]. These studies range from toddlers to adults, and evaluate skills as various as spoken vocabulary, understood vocabulary, and quality and quantity of writing.

Typically, studies find a wide range of performance in autistic groups. The majority are often unimpaired, while a minority may have significant delays [35,36]. Some examples:

- One group surveyed parents of a large sample of autistic toddlers with a wide range of IQ scores. Over three quarters of this group said their first words before 18 months, which is within the range of typical development. However, a little over 5% had still not spoken their first words by six years of age--a huge delay [37].

- In a group of autistic children and teenagers, half had average receptive vocabulary scores (on the BPVS). One quarter performed one to two standard deviations below average, and another quarter scored over two standard deviations above average [38].

Standard scores often range from as low as four standard deviations below average to two standard deviations above [28,39-41]. Thus, autistic people can rank among the most language impaired--or the most verbally gifted.

When researchers measure the rate of language development--instead of ability level at a given moment in time--autistic people are similarly variable.

Sometimes, despite lower initial performance, autistic people develop language skills faster, and for longer, than age peers [6,42]. Vocabulary may even improve into adulthood [43].

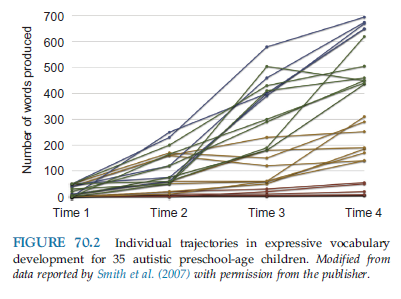

However, different individuals have very different rates of language development. The graph below shows the growth in spoken vocabulary development for 35 autistic preschoolers

44. Parents reported these children's vocabulary (using the MacArthur-Bates Communicative Development Inventory) four times over two years.

All the children started with a spoken vocabulary of fewer than 60 words, but they ended up with very different vocabulary sizes two years later. Those with the steepest growth could say nearly 700 words at the end of the study; another group showed little change at all. All these children were the same age, with similar levels of autistic traits, and similar measured IQ. Interestingly, they were also all undergoing the same interventions--which included speech and language therapy.

Overall, it seems that language development can be slower than normal during the first few years of life [45], but more rapid later on. However, individuals differ so greatly that it would be hard to identify a "typical rate" of autistic language development.

Autistic Language is Similar to that of People with Similar Language Skills.

Psychologists often draw a distinction between "delay" and "deviance." Delay is when a person develops in the same way as others, but does so more slowly. For example, someone with delayed spoken vocabulary might have the vocabulary of a typical two-year-old at the age of six. Deviance is when a person develops in a qualitatively different way. They might develop a different pattern of skills, or they might develop the same skills in a different order. For example, some people have claimed that autistic people have a unique difficulty understanding nonliteral language, such as metaphors. A person with a disability could, in theory, be delayed, deviant, or both. So, do autistic people have "deviant" language, or are they just more likely to be delayed?

In order to answer this question, we have to compare autistic people to those with similar levels of language development, but who are not autistic. Such comparison groups might include younger typically-developing children, late talkers, or children with specific language impairment. If autistic people are simply delayed, they will learn the same language skills in the same order as these "language-matched" peers.

And in fact, they do.

Autistic children do not have a unique difficulty with learning social or emotional words, or an advantage in learning words associated with special interests. They learn the same words in the same proportion and in the same order as younger typically developing children. For example, they are no less likely to learn words for people or social routines, and no more likely to learn words for vehicles [1]. They also are no less likely to say emotion words [46].

Autistic people also do not have any reliable difficulty with understanding and using nonliteral language, once their general language delays are taken into account. Autistic children in grade school learn to understand metaphors, draw inferences from stories, and structure their own narratives at the same time as language-matched peers [47-52]. Their level of language impairment, not their degree of autistic traits, predicts how much difficulty they have with nonliteral language [47-51].

Autistic children sometimes develop language for a time, then seem to abruptly lose it--this is called "regression." Some people have argued that regression is characteristic of, and unique to, autism.

Regression is hard to define and measure. However, it seems that only a subgroup of autistic children lose language this way. Interestingly, those who lose language were previously experiencing little or no delay [53,54]. Language loss also occurs in a seizure disorder called Landau-Kleffner syndrome.

Some people have claimed that autistic children use language in unique ways--for example, echolalia (repeating what they or others say), or pronoun reversal (e.g., switching "you" for "me" and vice versa). These characteristics appear to occur in only a small minority of autistic children, and are reported less and less frequently now that they are no longer included in the diagnostic manual. They also occur in other disabled groups.

Pronoun reversals do not occur in all autistic children, and they also occur in other populations (see my earlier post here, and Dr. Jon Brock's here). Young typically developing children sometimes confuse first- and second-person pronouns for a short time while learning them [55]. Children with other developmental disabilities, such as Down syndrome, also reverse pronouns [56]. Like these other groups, autistic children are more likely to have difficulty producing the correct pronouns when using more complex sentences, or complex types of pronouns [57-60]. Thus, pronoun reversals may be a normal part of early language development; they simply last longer in autistic children and those with Down syndrome because their language develops more slowly.

Echolalia was once viewed as unique to autism. However, very young typically developing children also produce echolalia--that is, they imitate all or part of the preceding utterance without any change. As they get older, they produce less echolalia [61,62]. Similarly, autistic children also may produce less echolalia as their language improves. The majority of children who were reported to have "lost" their autism diagnosis by age nine had once exhibited echolalia, but no longer did so [63]. A longitudinal study followed autistic and non-autistic children with language delay and measured their increase in language comprehension. During this time period, both groups made fewer immediate, exact repetitions, and increased their use of mitigated echolalia (e.g., making small changes to the repeated sentence to better express the desired meaning) [64]. Echolalia appears to be a stepping stone to full self-generated language, and it may last longer in autistic children when their language develops more slowly.

Some people, after observing young autistic children progress from repeating whole phrases unchanged to self-generated speech, have concluded that autistic children must learn language in an entirely different way than typically developing ones. That is, whereas typically developing children first learn what words mean and then how to put them together, autistic children first learn whole phrases, and only later learn what the words mean and what grammatical rules link them together. As far as I know, this hypothesis has yet to be directly tested with a large group of young autistic children. However, there is also nothing more than anecdotal evidence for it. If every known population learns language in one way, the burden of proof must be very high to show that one group learns it in the opposite way.

What can we conclude about language in autism?

In short:

- Autistic people's language is heterogeneous. Their language ability at any given time ranges from the most delayed to the most advanced possible. Their rate of development ranges from virtually nil [44] to nearly ten times that of typically developing peers [42].

- Language delays are common in autism.

- Autistic language is "delayed, not deviant." Researchers have yet to identify any characteristics of autistic language that are universal in autism or cannot be found in other groups. Autistic language is not unique, but continuous with typical development and language disabilities.

References

1. Charman, T., Drew, A., Baird, C., & Baird, G. (2003). Measuring early language development in preschool children with autism spectrum disorder using the MacArthur Communicative Development Inventory (Infant Form). Journal of Child Language, 30, 213-236.

2. Matson, J. L., Mahan, S., Kozlowski, A. M., & Shoemaker, M. (2010). Developmental milestones in toddlers with autistic disorder, pervasive developmental disorder-not otherwise specified, and atypical development. Developmental Neurorehabilitation, 13, 239-247.

3. Grandgeorge, M., Hausberger, M., Tordjman, S., Deleau, M., Lazartigues, A., & Lemonnier, E. (2009). Environmental factors influence language development in children with autism spectrum disorders. PLoS ONE, 4.

4. Kenworthy, L., Wallace, G. L., Powell, K., Anselmo, C., Martin, A., & Black, D. O. (2012). Early language milestones predict later language, but not autism symptoms in higher functioning children with autism spectrum disorders. Research in Autism Spectrum Disorders, 6, 1194-1202.

5. Pry, R., Peterson, A. F., & Baghdadli, A. M. (2011). On general and specific markers of lexical development in children with autism from 5 to 8 years of age. Research in Autism Spectrum Disorders, 5, 1243-1252.

6. Anderson, K., Lord, C., Risi, S., DiLavore, P. S., Shulman, C., Thurm, A., et al. (2007). Patterns of growth in verbal abilities among children with autism spectrum disorder. Journal of Consulting and Clinical Psychology, 75, 594-604.

7. Wodka, E. L., Mathy, P., & Kalb, L. (2013). Predictors of phrase and fluent speech in children with autism and severe language delay. Pediatrics, 131, e1128-e1134.

8. Fulton, M. L., & D’Entremont, B. (2013). Utility of the psychoeducational profile-3 for assessing cognitive and language skills of children with autism spectrum disorder. Journal of Autism and Developmental Disorders, 43, 2460-2471.

9. Kover, S. T., McDuffie, A. S., Hagerman, R. J., & Abbeduto, L. (2013). Receptive vocabulary in boys with autism spectrum disorder: Cross-sectional developmental trajectories. Journal of Autism and Developmental Disorders, 43, 2696-2709.

10. Luyster, R. J., Kadlec, M. B., Carter, A., & Tager-Flusberg, H. (2008). Language assessment and development in toddlers with autism spectrum disorders. Journal of Autism and Developmental Disorders, 38, 1426-1438.

11. Luyster, R., Lopez, K., & Lord, C. (2007). Characterizing communicative development in children referred for autism spectrum disorder using the MacArthur-Bates Communicative Development Inventory (CDI). Journal of Child Language, 34, 623-654.

12. Miniscalco, C., Fränberg, J., Schachinger-Lorentzon, U., & Gillberg, C. (2012). Meaning what you say? Comprehension and word production in young children with autism. Research in Autism Spectrum Disorders, 6, 204-211.

13. Sandercock, R. K. (2013). Gesture as a predictor of language development in infants at high risk for autism spectrum disorders (unpublished doctoral dissertation). Pittsburgh, PA: University of Pittsburgh.

14. Stone, W. L., & Yoder, P. J. (2001). Predicting spoken language level in children with autism spectrum disorders. Autism, 5, 341-361.

15. Agin, M. C. (2004). The “late talker”—When silence isn’t golden. Contemporary Pediatrics, 21, 22 -32.

16. Maljaars, J., Noens, I., Scholte, E., & Van Berckelaer-Onnes, I. (2012). Language in low-functioning children with autistic disorder: Differences between receptive and expressive skills and concurrent predictors of language. Journal of Autism and Developmental Disorders, 42, 2181-2191.

17. Miniscalco et al., 2012 (see reference 12).

18. Paul, R., Chawarska, K., Cicchetti, D., & Volkmar, F. (2008). Language outcomes of toddlers with autism spectrum disorders: A two year follow-up. Autism Research, 1, 97-107.

19. Paul, R., Chawarska, K., Fowler, C., Cicchetti, D., & Volkmar, F. (2007). “Listen my children and you shall hear”: Auditory preferences in toddlers with autism spectrum disorders. Journal of Speech, Language, and Hearing Research, 50, 1350-1364.

20. Vanvuchelen, M., Roeyers, R., & DeWeerdt, W. (2011). Imitation assessment and its utility to the diagnosis of autism: Evidence from consecutive clinical preschool referrals for suspected autism. Journal of Autism and Developmental Disorders, 41, 484-496.

21. Grigorenko, E. L., Klin, A., Pauls, D. L., Senft, R., Hooper, C., & Volkmar, F. (2002). A descriptive study of hyperlexia in a clinically referred sample of children with developmental delays. Journal of Autism and Developmental Disorders, 32, 3-12.

22. Howlin, P. (2003). Outcome in high-functioning adults with autism with and without early language delays: Implications for the differentiation between autism and asperger syndrome. Journal of Autism and Developmental Disorders, 33, 3-13.

23. Sigman, M., & McGovern, C. W. (2005). Improvement in cognitive and language skills from preschool to adolescence in autism. Journal of Autism and Developmental Disorders, 35, 15-23.

24. Wisdom, S. N., Dyck, M. J., Piek, J. P., Hay, D., & Hallmayer, J. (2007). Can autism, language, and coordination disorders be differentiated based on ability profiles? European Child and Adolescent Psychiatry, 16, 178-186.

25. Sutera, S., Pandey, J., Esser, E. L., Rosenthal, M. A., Wilson, L. B., Barton, M., et al. (2007). Predictors of optimal outcome in toddlers diagnosed with autism spectrum disorders. Journal of Autism and Developmental Disorders, 37, 98-107.

26. Swensen, L. D., Kelley, E., Fein, D., & Naigles, L. R. (2007). Processes of language acquisition in children with autism: Evidence from preferential looking. Child Development, 78, 542-557.

27. Hudry, K., Leadbitter, K., Temple, K., Slonims, V., McConachie, H., Aldred, C., et al. (2010). Preschoolers with autism show greater impairment in receptive compared with expressive language abilities. International Journal of Language & Communication Disorders, 45, 681-690.

28. Jasmin, E., Couture, M., McKinley, P., Reid, G., Fombonne, E., & Gisel, E. (2009). Sensori-motor and daily living skills of preschool children with autism spectrum disorders. Journal of Autism and Developmental Disorders, 39, 231-241.

29. Walton, K. M., & Ingersoll, B. R. (2013). Expressive and receptive fast-mapping in children with autism spectrum disorders and typical development: The influence of orienting cues. Research in Autism Spectrum Disorders, 7, 687-698.

30. Goodwin, A., Fein, D., & Naigles, L. R. (2012). Comprehension of wh-questions precedes their production in typical development and autism spectrum disorders. Autism Research, 5, 109-123.

31. Åsberg, J. (2010). Patterns of language and discourse comprehension skills in school-aged children with autism spectrum disorders. Scandinavian Journal of Psychology, 51, 534-539.

32. Henderson, L. M., Clarke, P. J., & Snowling, M. J. (2011). Accessing and selecting word meaning in autism spectrum disorder. Journal of Child Psychology and Psychiatry, 52, 964-973.

33. Paul, R., Augustyn, A., Klin, A., & Volkmar, F. R. (2005). Perception and production of prosody by speakers with autism spectrum disorders. Journal of Autism and Developmental Disorders, 35, 205-220.

34. Troyb, E. (2011). Academic abilities in children and adolescents with a history of autism spectrum disorders who have achieved optimal outcomes (unpublished master’s thesis, paper 189). Storrs, CT: University of Connecticut.

35. Jones, C. D., & Schwartz, I. S. (2009). When asking questions is not enough: An observational study of social communication differences in high functioning children with autism. Journal of Autism and Developmental Disorders, 39, 432-443.

36. Åsberg, J., & Dahlgren Sandberg, A. (2012). Dyslexic, delayed, precocious, or just normal? Word reading skills of children with autism spectrum disorders. Journal of Research in Reading, 35, 20-31.

37. Wilson, S., Djukic, A., Shinnar, S., Dharmani, C., & Rapin, I. (2003). Clinical characteristics of language regression in children. Developmental Medicine & Child Neurology, 45(8), 508-514.

38. McCann, J., Peppé, S., Gibbon, F. E., O’Hare, A., & Rutherford, M. (2005). Prosody and its relationship to language in school-aged children with high-functioning autism (working paper WP-3). Queen Margaret University College Speech Science Research Center.

39. Nation, K., Clarke, P., Wright, B., & Williams, C. (2006). Patterns of reading ability in children with autism spectrum disorder. Journal of Autism and Developmental Disorders, 36, 911-919.

40. Joseph, R. M., McGrath, L. M., & Tager-Flusberg, H. (2005). Executive dysfunction and its relation to language ability in verbal school-age children with autism. Developmental Neuropsychology, 27, 361-378.

41. Ricketts, J., Jones, C. R. G., Happé, F., & Charman, T. (2013). Reading comprehension in autism spectrum disorders: The role of oral language and social functioning. Journal of Autism and Developmental Disorders, 43, 807-816.

42. Cariello, A., Bigler, E. D., Tolley, S. E., Prigge, M. D., Neeley, E. S., Lange, N., et al. (2011, May). A longitudinal look at expressive, receptive, and total language development in individuals with autism spectrum disorders. Paper presented at the International Meeting for Autism Research, San Sebastian, Spain.

43. Mawhood, L., Howlin, P., & Rutter, M. (2000). Autism and developmental receptive language disorder—A comparative follow-up in early adult life. I: Cognitive and language outcomes. Journal of Child Psychology and Psychiatry, 41, 547- 559.

44. Smith, V., Mirenda, P., & Zaidman-Zait, A. (2007). Predictors of expressive vocabulary growth in children with autism. Journal of Speech, Language, and Hearing Research, 50, 149-160.

45. Landa, R., & Garrett-Mayer, E. (2006). Development in infants with autism spectrum disorders: A prospective study. Journal of Child Psychology and Psychiatry, 47, 629-638.

46. Ellis Weismer, S., Gernsbacher, M. A., Stronach, S., Karasinski, K., Eernisse, E. R., Venker, C. E., et al. (2011). Lexical and grammatical skills in toddlers on the autism spectrum compared to late talking toddlers. Journal of Autism and Developmental Disorders, 41, 1065-1075.

47. Norbury, C. F. (2004). Factors supporting idiom comprehension in children with communication disorders. Journal of Speech, Language, and Hearing Research, 47, 1179-1193.

48. Norbury, C. F. (2005a). Barking up the wrong tree? Lexical ambiguity resolution in children with language impairments and autistic spectrum disorders. Journal of Experimental Child Psychology, 90, 142-171.

49. Norbury, C. F. (2005b). The relationship between theory of mind and metaphor: Evidence from children with language impairment and autistic spectrum disorder. British Journal of Developmental Psychology, 23, 383-399.

50. Norbury, C. F., & Bishop, D. V. M. (2002). Inferential processing and story recall in children with communication problems: A comparison of specific language impairment, pragmatic language impairment and high-functioning autism. International Journal of Language & Communication Disorders, 37, 227-251.

51. Norbury, C. F., & Bishop, D. V. M. (2003). Narrative skills of children with communication impairments. International Journal of Language & Communication Disorders, 38, 287-313.

52. Young, E. C., Diehl, J. J., Morris, D., Hyman, S. L., & Bennetto, L. (2005). The use of two language tests to identify pragmatic language problems in children with autism spectrum disorders. Language, Speech, and Hearing Services in Schools, 36, 62-72.

53. Baird, G., Charman, T., Pickles, A., Chandler, S., Loucas, T., Meldrum, D., et al. (2008). Regression, developmental trajectory and associated problems in disorders in the autism spectrum: The SNAP study. Journal of Autism and Developmental Disorders, 38, 1827-1836.

54. Pickles, A., Simonoff, E., Conti-Ramsden, G., Falcaro, M., Simkin, Z., Charman, T., et al. (2009). Loss of language in early development of autism and specific language impairment. Journal of Child Psychology and Psychiatry, 50, 843-852.

55. Evans, K. E., & Demuth, K. (2012). Individual differences in pronoun reversal: Evidence from two longitudinal case studies. Journal of Child Language, 39, 162-191.

56. Warner, G., Moss, J., Smith, P., & Howlin, P. (2014). Autism characteristics and behavioral disturbances in ~500 children with Down’s syndrome in England and Wales. Autism Research, 7, 433-441.

57. Arnold, J. E., Bennetto, L., & Diehl, J. J. (2009). Reference production in young speakers with and without autism: Effects of discourse status and processing constraints. Cognition, 110, 131-146.

58. Fortunato-Tavares, T., Andrade, C. R., Befi-Lopes, D., Limongi, S. O., Fernandes, F. D., & Schwartz, R. G. (2015). Syntactic comprehension and working memory in children with specific language impairment, autism or Down syndrome. Clinical Linguistics & Phonetics, 29, 499-522.

59. Perovic, A., Modyanova, N., & Wexler, K. (2013). Comprehension of reflexive and personal pronouns in children with autism: A syntactic or pragmatic deficit? Applied Psycholinguistics, 34, 813-835.

60. Terzi, A., Marinis, T., Katsopoulou, A., & Francis, K. (2014). Grammatical abilities of Greek-speaking children with autism. Language Acquisition, 21, 4-44.

61. Bloom, L., Rocissano, L., & Hood, L. (1976). Adult-child discourse: Developmental interaction between information processing and linguistic knowledge. Cognitive Psychology, 8, 521-552.

62. van Santen, J. P. H., Sproat, R. W., & Hill, A. P. (2013). Quantifying repetitive speech in autism spectrum disorders and language impairment. Autism Research, 6, 372-383.

63. Kelley, E., Paul, J. J., Fein, D., & Naigles, L. R. (2006). Residual language deficits in optimal outcome children with a history of autism. Journal of Autism and Developmental Disorders, 36, 807-828.

64. Roberts, J. M. A. (2014). Echolalia and language development in children with autism. In J. Arciuli & J. Brock (Eds.), Communication in autism (pp. 55-73). Amsterdam: John Benjamins.